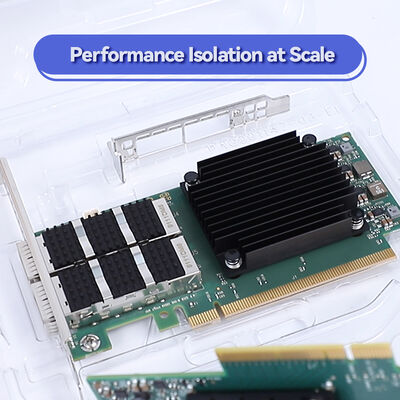

NVIDIA ConnectX-7 MCX755106AS-HEAT 400G InfiniBand Adapter: Single-Port NDR, PCIe 5.0, Hardware-Accelerated Security and Storage for Scalable Workloads

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | MCX755106AS-HEAT (900-9X7AH-0078-DTZ) |
| Document: | Connectx-7 infiniband.pdf |

Payment:

| Minimum Order Quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging Details: | Outer box |
| Delivery Time: | Based on stock availability |
| Payment Terms: | T/T |
| Supply Ability: | By project/batch |

Additional Information:

| Model Number: | MCX755106AS-HEAT (900-9X7AH-0078-DTZ) | Ports: | 2-Port |
|---|---|---|---|
| Technology: | InfiniBand | Interface Type: | OSFP56 |
| Dimensions: | 16.7cm x 6.9cm | Origin: | India / Israel / China |
| Transfer Rate: | 200G | Host Interface: | Gen3 x16 |
| Highlight: | NVIDIA ConnectX-7 InfiniBand adapter, 400G InfiniBand network card, PCIe 5.0 Mellanox adapter | | |

Product Description

A high-performance single-port 400Gb/s adapter for InfiniBand NDR and 400GbE networks, featuring PCIe 5.0 x16, built-in hardware security (IPsec/TLS/MACsec), NVIDIA In-Network Computing engines, and NVMe-oF offload for AI, HPC, and enterprise data centers.

- Single QSFP112 port supporting 400Gb/s InfiniBand (NDR) and 400/200/100/50/25/10GbE

- PCIe Gen 5.0 x16 (backward compatible with Gen 4.0/3.0)

- Hardware offloads: NVMe-oF target/initiator, 256/512-bit XTS-AES encryption, MPI tag matching

- Built-in security engines: IPsec, TLS 1.3, and MACsec with 128/256-bit AES-GCM

- Half-height, half-length (HHHL) PCIe form factor, RoHS compliant, advanced timing (PTP/SyncE)
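MPI tag matching, one of the offloads listed above, is the step that pairs each arriving message with a receive the application has already posted. As a rough illustration of the work being moved off the CPU, the Python sketch below models the standard MPI matching rule in software: a posted receive matches when the communicator agrees and the source and tag either agree or are wildcards, and the earliest-posted match wins. It is not the adapter's implementation; `PostedRecv`, `Message`, `match_message`, and the `ANY_SOURCE`/`ANY_TAG` values are hypothetical stand-ins (real MPI uses `MPI_ANY_SOURCE` and `MPI_ANY_TAG`).

```python
from dataclasses import dataclass
from typing import List, Optional

ANY_SOURCE = -1  # hypothetical stand-in for MPI_ANY_SOURCE
ANY_TAG = -1     # hypothetical stand-in for MPI_ANY_TAG


@dataclass
class PostedRecv:
    comm: int
    source: int
    tag: int
    name: str  # label used only to identify the receive in this example


@dataclass
class Message:
    comm: int
    source: int
    tag: int


def matches(recv: PostedRecv, msg: Message) -> bool:
    """A posted receive matches if communicator, source and tag agree (wildcards allowed)."""
    return (
        recv.comm == msg.comm
        and recv.source in (ANY_SOURCE, msg.source)
        and recv.tag in (ANY_TAG, msg.tag)
    )


def match_message(posted: List[PostedRecv], unexpected: List[Message],
                  msg: Message) -> Optional[PostedRecv]:
    """Pair an arriving message with the earliest matching posted receive.

    The first match in posting order wins and is removed from the list; if
    nothing matches, the message goes to the unexpected queue and None is returned.
    """
    for i, recv in enumerate(posted):
        if matches(recv, msg):
            return posted.pop(i)
    unexpected.append(msg)
    return None


posted = [
    PostedRecv(comm=0, source=3, tag=7, name="r1"),
    PostedRecv(comm=0, source=ANY_SOURCE, tag=7, name="r2"),
    PostedRecv(comm=0, source=ANY_SOURCE, tag=ANY_TAG, name="r3"),
]
unexpected: List[Message] = []

print(match_message(posted, unexpected, Message(comm=0, source=5, tag=7)).name)   # r2
print(match_message(posted, unexpected, Message(comm=0, source=3, tag=7)).name)   # r1
print(match_message(posted, unexpected, Message(comm=0, source=9, tag=42)).name)  # r3
print(match_message(posted, unexpected, Message(comm=0, source=1, tag=1)))        # None
print(len(unexpected))  # 1
```

For brevity the sketch only handles arriving messages; a complete matcher also searches the unexpected queue whenever a new receive is posted.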

- 400Gb/s throughput: The single port runs at up to 400Gb/s InfiniBand (NDR) or 400GbE with full bidirectional bandwidth.

- In-Network Computing: Offloads collective operations (MPI, NCCL, SHMEM) using NVIDIA SHARP technology.

- Inline security: Hardware encryption and decryption for IPsec, TLS 1.3, and MACsec at line rate; secure boot with a hardware root of trust.

- NVMe-oF offload: Target and initiator for NVMe over Fabrics (including NVMe/TCP), reducing CPU utilization.

- Precision timing: IEEE 1588v2 PTP with 12ns accuracy, SyncE, and configurable PPS in/out.
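The PTP feature above rests on the IEEE 1588 exchange of four timestamps; taking them in adapter hardware, close to the wire, keeps host software jitter out of the measurement. The sketch below shows only the standard offset and mean-path-delay arithmetic, assuming a symmetric path as IEEE 1588 does. The `PtpTimestamps` type and `offset_and_delay` function are hypothetical names, and the example timestamps are made up.

```python
from typing import NamedTuple, Tuple


class PtpTimestamps(NamedTuple):
    t1: int  # Sync sent by master (master clock, ns)
    t2: int  # Sync received by slave (slave clock, ns)
    t3: int  # Delay_Req sent by slave (slave clock, ns)
    t4: int  # Delay_Req received by master (master clock, ns)


def offset_and_delay(ts: PtpTimestamps) -> Tuple[float, float]:
    """Return (slave offset from master, mean one-way path delay) in ns.

    Assumes the path delay is identical in both directions.
    """
    forward = ts.t2 - ts.t1   # path delay + offset
    backward = ts.t4 - ts.t3  # path delay - offset
    offset = (forward - backward) / 2
    delay = (forward + backward) / 2
    return offset, delay


# Example: the slave clock runs 50 ns ahead and the true one-way delay is 200 ns.
ts = PtpTimestamps(t1=1_000, t2=1_250, t3=2_000, t4=2_150)
offset, delay = offset_and_delay(ts)
print(f"offset={offset} ns, delay={delay} ns")  # offset=50.0 ns, delay=200.0 ns
```

If the two directions have different delays, the computed offset is off by half that asymmetry, and the formula alone cannot detect it.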

MCX755106AS-HEAT integrates NVIDIA In-Network Computing engines (SHARP), RDMA (IBTA 1.5), RoCE, and NVMe-oF. It supports PCIe Gen 5.0 (x16), PAM4 (100G) and NRZ (10G/25G) SerDes, and advanced features such as Dynamically Connected Transport (DCT), On-Demand Paging (ODP), and adaptive routing. Overlay offloads for VXLAN, GENEVE, and NVGRE are hardware-accelerated. The adapter complies with IEEE 802.3ck, IEEE 802.3bj, and InfiniBand Trade Association specifications.
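With overlay offloads, tunnel encapsulation, decapsulation, and inner-header processing are typically handled by the adapter rather than by the host network stack. As a small reference for the per-packet header involved, the sketch below packs and parses the 8-byte VXLAN header defined in RFC 7348 (a flags byte with the I bit set, a 24-bit VNI, and zeroed reserved fields). The outer Ethernet/IP/UDP headers and checksums are omitted, and `build_vxlan_header`/`parse_vni` are hypothetical helpers, not part of any NVIDIA API.

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08  # the "I" flag: the VNI field is valid (RFC 7348)


def build_vxlan_header(vni: int) -> bytes:
    """Pack the 8-byte VXLAN header defined in RFC 7348."""
    if not 0 <= vni < 1 << 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: 8 flag bits followed by 24 reserved bits.
    # Word 2: 24-bit VNI followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from an 8-byte VXLAN header, checking the I flag."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag not set")
    return vni_word >> 8


hdr = build_vxlan_header(5001)
print(hdr.hex())       # 0800000000138900
print(parse_vni(hdr))  # 5001
```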

ConnectX-7 offloads communication, storage, and security tasks from the host CPU to the adapter hardware. For MPI collectives, the adapter processes data in transit using SHARP, reducing endpoint traffic. For storage, NVMe-oF commands are processed directly on the adapter, freeing CPU cores. Inline cryptographic engines (IPsec/TLS/MACsec) encrypt and decrypt packets at wire speed without CPU involvement. The result is lower latency, higher message rates, and better application scalability, all essential in 400G environments.
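The SHARP point above (collective data reduced in transit, cutting endpoint traffic) can be pictured as an aggregation tree: each network node sums the partial vectors arriving from below and forwards a single result upward, and the final value is sent back down to every host. The toy model below illustrates that idea only; it is not the SHARP protocol, and `Node`, `reduce_up`, `broadcast_down`, and `ring_allreduce_bytes_per_host` are hypothetical names. For comparison it includes the well-known per-host send volume of a ring allreduce, 2(p - 1)/p × N bytes, whereas in the tree model each host sends its N-byte vector once.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    """Hypothetical tree node: a host (leaf, holds data) or a switch (has children)."""
    data: List[float] = field(default_factory=list)
    children: List["Node"] = field(default_factory=list)


def reduce_up(node: Node) -> List[float]:
    """Sum vectors element-wise on the way up the tree."""
    if not node.children:  # host: contributes its local vector once
        return list(node.data)
    partials = [reduce_up(child) for child in node.children]
    return [sum(values) for values in zip(*partials)]  # switch: one vector upward


def broadcast_down(node: Node, result: List[float]) -> None:
    """Deliver the final reduced vector back to every host."""
    if not node.children:
        node.data = list(result)
    for child in node.children:
        broadcast_down(child, result)


def ring_allreduce_bytes_per_host(p: int, n_bytes: int) -> float:
    """Bytes each host sends in a classic ring allreduce: 2 * (p - 1) / p * N."""
    return 2 * (p - 1) / p * n_bytes


hosts = [Node(data=[float(i), 1.0]) for i in range(4)]
root = Node(children=[Node(children=hosts[:2]), Node(children=hosts[2:])])
total = reduce_up(root)
broadcast_down(root, total)
print(total)          # [6.0, 4.0]
print(hosts[3].data)  # [6.0, 4.0]

# In the tree model each host sends N bytes once; a 4-host ring sends:
print(ring_allreduce_bytes_per_host(p=4, n_bytes=1_000_000))  # 1500000.0
```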

- AI training nodes: GPU-to-GPU communication with GPUDirect RDMA and NCCL.

- HPC compute nodes: MPI-based simulations that require ultra-low latency and high message rates.

- NVMe-oF storage: Target/initiator for high-performance access to NVMe storage.

- Secure cloud servers: Inline IPsec/TLS for multi-tenant security without CPU overhead.

- Financial trading: Precise PTP timing for high-frequency trading and timestamping.

| Model | Ports & Speed | Host Interface | Form Factor | Security Offloads | Protocols | OPN |
|---|---|---|---|---|---|---|
| ConnectX-7 | 1x QSFP112 (400Gb/s NDR/400GbE) | PCIe 5.0 x16 | PCIe HHHL | IPsec, TLS 1.3, MACsec, AES-XTS | InfiniBand, Ethernet, NVMe-oF | MCX755106AS-HEAT |
| ConnectX-7 | 2x QSFP112 (400Gb/s) | PCIe 5.0 x16 | PCIe HHHL | IPsec/TLS/MACsec | IB/Eth | MCX75310AAS-NEAT |
| ConnectX-7 | 1x QSFP112 (200Gb/s) | PCIe 5.0 x16 | OCP 3.0 | IPsec/TLS/MACsec | IB/Eth | MCX755106AS-HEAT (OCP) |

Note: MCX755106AS-HEAT supports 400Gb/s InfiniBand (NDR) and 400/200/100/50/25/10GbE. Dimensions: 167.65mm x 68.90mm (HHHL). Tall and low-profile brackets are included. Typical power consumption is under 20W.

- vs. ConnectX-6: Twice the bandwidth (400Gb/s vs. 200Gb/s), PCIe 5.0, IPsec/TLS/MACsec, and enhanced PTP with 12ns accuracy.

- vs. competing NICs: True hardware offload for NVMe-oF, MPI collectives, and a complete security suite, all at line rate.

- Single-port efficiency: Ideal for leaf nodes where a second port is not needed, reducing cost and power.

- Integrated security: Eliminates the need for external cryptographic appliances; FIPS-compliance ready.

We provide 24/7 technical consultation, RMA service, and integration support for ConnectX-7 adapters. Every card is backed by a 1-year warranty (extendable). Our team provides driver validation for major Linux distributions (RHEL, Ubuntu), Windows Server, and VMware. Pre-sales configuration support is available for NDR InfiniBand fabric design. All cards ship from our $10M+ inventory with same-day dispatch.

A: Yes, it is fully compatible with NVIDIA Quantum-2 QM9700/QM9790 switches in NDR mode at 400Gb/s.

A: Yes, it supports both InfiniBand and Ethernet. The software automatically detects the switch type and configures the appropriate mode.

A: Yes, ConnectX-7 fully supports RoCE, providing low-latency RDMA in Ethernet environments.

A: Inline hardware engines for IPsec (AES-GCM 128/256), TLS 1.3, MACsec, and 256/512-bit block-level XTS-AES encryption. It also features secure boot with a hardware root of trust.

A: Yes, it is compatible with PCIe Gen 4.0 and Gen 3.0 slots, although bandwidth is limited by the slot's capacity (approximately 200Gb/s on Gen 4.0).
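The roughly 200Gb/s figure for a Gen 4.0 slot follows from PCIe arithmetic. Gen 3.0, 4.0, and 5.0 signal at 8, 16, and 32 GT/s per lane with 128b/130b encoding, so an x16 link tops out near 126, 252, and 504 Gb/s per direction before protocol overhead. TLP headers and flow control take a further share, which is why a Gen 4.0 x16 slot cannot carry a 400Gb/s port and ends up around the 200Gb/s quoted above. The sketch below computes only the encoding-limited ceiling; `pcie_ceiling_gbps` and `slot_limits_port` are hypothetical helper names.

```python
# Per-lane signalling rates in GT/s; Gen3 and later use 128b/130b line encoding.
PCIE_GT_PER_LANE = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130


def pcie_ceiling_gbps(gen: int, lanes: int = 16) -> float:
    """Per-direction bandwidth after line encoding, in Gb/s.

    Upper bound only: TLP/DLLP headers and flow control reduce usable
    throughput further.
    """
    return PCIE_GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def slot_limits_port(gen: int, port_gbps: float, lanes: int = 16) -> bool:
    """True if the slot's encoding-limited ceiling is below the port speed."""
    return pcie_ceiling_gbps(gen, lanes) < port_gbps


for gen in (3, 4, 5):
    ceiling = pcie_ceiling_gbps(gen)
    print(f"Gen{gen} x16: ~{ceiling:.0f} Gb/s per direction, "
          f"limits a 400Gb/s port: {slot_limits_port(gen, 400)}")
# Gen3 x16: ~126 Gb/s per direction, limits a 400Gb/s port: True
# Gen4 x16: ~252 Gb/s per direction, limits a 400Gb/s port: True
# Gen5 x16: ~504 Gb/s per direction, limits a 400Gb/s port: False
```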

- PCIe slot requirements: For full 400Gb/s performance, install the card in a PCIe Gen 5.0 x16 slot. A Gen 4.0 slot limits throughput to ~200Gb/s.

- Cooling: Ensure adequate airflow through the server chassis; passive cooling requires at least 300 LFM for 400G operation.

- Cabling: Use QSFP112 passive/active copper cables or optical modules for 400Gb/s (NDR).

- Driver support: Use the latest NVIDIA MLNX_OFED for Linux or WinOF-2 for Windows.

- Operating temperature: 0°C to 70°C; storage temperature: -40°C to 85°C.
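To confirm after installation that the port negotiated the expected rate and that the card trained at Gen 5.0 x16, Linux exposes both through sysfs. The sketch below assumes the usual layout for RDMA devices (`/sys/class/infiniband/<dev>/ports/<n>/rate` and `state`, plus `current_link_speed` and `current_link_width` under the `device` link to the PCI function). Device names such as `mlx5_0` and the helper names are illustrative, and attribute formats can vary between kernel and driver versions; `ibstat` and `lspci -vv` report the same information interactively.

```python
import glob
import os
from typing import Dict


def parse_ib_rate(text: str) -> float:
    """Parse a port 'rate' value such as '400 Gb/sec (4X NDR)' into Gb/s."""
    return float(text.split()[0])


def parse_link_speed(text: str) -> float:
    """Parse a PCIe 'current_link_speed' value such as '32.0 GT/s PCIe' into GT/s."""
    return float(text.split()[0])


def read_attr(path: str) -> str:
    """Read one sysfs attribute and strip the trailing newline."""
    with open(path) as f:
        return f.read().strip()


def check_ports(sysfs_root: str = "/sys/class/infiniband") -> Dict[str, dict]:
    """Collect negotiated port rate/state and the PCIe link of each RDMA device.

    Assumed layout: <root>/<dev>/ports/<n>/{rate,state} and
    <root>/<dev>/device/{current_link_speed,current_link_width}.
    """
    report = {}
    for port_dir in sorted(glob.glob(os.path.join(sysfs_root, "*", "ports", "*"))):
        dev_dir = os.path.dirname(os.path.dirname(port_dir))
        pci_dir = os.path.join(dev_dir, "device")
        key = f"{os.path.basename(dev_dir)}/port{os.path.basename(port_dir)}"
        report[key] = {
            "rate_gbps": parse_ib_rate(read_attr(os.path.join(port_dir, "rate"))),
            "state": read_attr(os.path.join(port_dir, "state")),
            "pcie_gt_s": parse_link_speed(read_attr(os.path.join(pci_dir, "current_link_speed"))),
            "pcie_width": int(read_attr(os.path.join(pci_dir, "current_link_width"))),
        }
    return report
```

On a host with the driver loaded, calling `check_ports()` returns one entry per port; a `pcie_gt_s` of 16.0 or a `pcie_width` below 16 would indicate the slot is limiting the card, as described in the notes above.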

With more than a decade of experience, we operate a large factory backed by a strong technical team. Our broad customer base and domain expertise allow us to offer competitive prices without compromising quality. As authorized distributors for Mellanox, Ruckus, Aruba, and Extreme, we stock original network switches, network interface card (NIC) solutions, wireless access points, controllers, and cabling. We maintain a $10 million inventory to ensure fast supply of a wide range of products. Every shipment is verified for accuracy, and we provide 24-hour consultation and technical support. Our professional sales and technical teams have earned a strong reputation in global markets.