NVIDIA MCX555A-ECAT InfiniBand Network Card, 100Gb/s, Single-Port QSFP28, PCIe 3.0 x16, ConnectX-5

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MCX555A-ECAT |
| Certification: | CONNECTX-5 infiniband.pdf |

Payment:

| Minimum order quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Subject to stock |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Additional information:

| Product status: | In stock | Application: | Server |
|---|---|---|---|
| Condition: | New and original | Type: | Wired |
| Maximum speed: | EDR and 100GbE | Ethernet connector: | QSFP28 |


Product Description

A high-performance, low-latency 100Gb/s network adapter designed for HPC, AI, and cloud data centers. It provides advanced offloads, including NVMe over Fabrics, GPUDirect RDMA, and tag matching for MPI workloads, delivering industry-leading throughput and CPU efficiency.

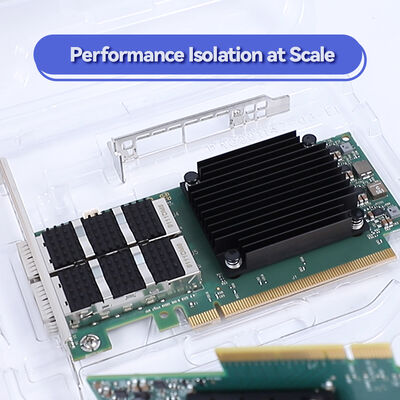

The NVIDIA ConnectX-5 MCX555A-ECAT is a single-port 100Gb/s InfiniBand adapter card in a low-profile PCIe form factor. Built on the proven ConnectX-5 architecture, it delivers up to 100Gb/s of throughput with sub-microsecond latency and a high message rate. The card supports both InfiniBand (up to EDR) and 100GbE, providing versatile connectivity for high-performance computing, storage, and virtualized environments.

Built with an embedded PCIe switch and advanced RDMA capabilities, the MCX555A-ECAT offloads critical communication tasks from the CPU, enabling higher application performance, lower power consumption, and a lower total cost of ownership. The card is fully compatible with PCIe 3.0 x16 slots and supports a wide range of operating systems and acceleration frameworks.

Key features:

- Up to 100Gb/s connectivity per port (InfiniBand EDR / 100GbE)

- Single QSFP28 connector for optical or copper cables

- PCIe 3.0 x16 host interface (auto-negotiates down to x8, x4, x2, or x1)

- RDMA and send/receive semantics with hardware-based reliable transport

- Tag matching and rendezvous offloads for MPI and SHMEM

- NVMe over Fabrics (NVMe-oF) target offload for efficient storage

- GPUDirect RDMA (PeerDirect) acceleration for GPU communication

- Hardware-based congestion control and adaptive routing support

- SR-IOV virtualization: up to 512 virtual functions

- RoHS compliant, low-profile form factor (tall bracket included)
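On Linux, the SR-IOV virtual functions listed above are created through the kernel's standard PCI sysfs attributes `sriov_totalvfs` and `sriov_numvfs`. The sketch below is a minimal illustration under several assumptions: it runs as root, SR-IOV has already been enabled in the adapter firmware (for example with NVIDIA's `mlxconfig` tool, `SRIOV_EN` and `NUM_OF_VFS`) and in the server BIOS, and the helper name `enable_vfs` and the interface name `ib0` are hypothetical.

```python
from pathlib import Path

# Upper bound quoted in the specifications; the usable number is whatever the
# firmware reports in sriov_totalvfs, which may be lower.
MAX_VFS_SPEC = 512


def enable_vfs(iface: str, num_vfs: int, sysfs_root: str = "/sys/class/net") -> int:
    """Ask the kernel to create num_vfs SR-IOV virtual functions for iface."""
    dev = Path(sysfs_root) / iface / "device"
    total = int((dev / "sriov_totalvfs").read_text().strip())
    limit = min(total, MAX_VFS_SPEC)
    if not 0 <= num_vfs <= limit:
        raise ValueError(f"{iface}: requested {num_vfs} VFs, limit is {limit}")
    numvfs = dev / "sriov_numvfs"
    # The kernel refuses to change one non-zero VF count to another,
    # so drop back to 0 first when VFs already exist.
    if int(numvfs.read_text().strip() or "0") != 0:
        numvfs.write_text("0")
    numvfs.write_text(str(num_vfs))
    return num_vfs
```

For example, `enable_vfs("ib0", 8)` requests eight VFs, which can then be passed through to virtual machines; the `sysfs_root` parameter exists only so the logic can be exercised outside a real system.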

The ConnectX-5 architecture integrates a set of hardware acceleration engines that reduce CPU involvement and improve application scalability:

- MPI tag matching and rendezvous offload: offloads message matching and the rendezvous protocol to the adapter, significantly improving MPI performance in HPC clusters.

- Out-of-order RDMA with adaptive routing: makes efficient use of multiple network paths while preserving ordered completion semantics, maximizing network utilization.

- NVMe-oF target offload: lets NVMe storage systems serve remote access with near-zero CPU overhead, which is ideal for disaggregated storage architectures.

- Dynamically Connected Transport (DCT): eliminates connection setup overhead, providing exceptional scalability for large compute and storage systems.

- Accelerated Switching and Packet Processing (ASAP2): hardware offload for Open vSwitch (OVS) and overlay network tunneling (VXLAN, NVGRE, GENEVE).

- On-Demand Paging (ODP): allows RDMA operations on virtual memory without pinning it in advance, simplifying application development.
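Tag matching is the bookkeeping an MPI library otherwise performs on the CPU: every incoming message is compared against the posted receives (communicator, source, tag, with wildcards), and messages that arrive early wait in an "unexpected" queue. The sketch below is a simplified software model of those MPI matching rules, written only to show what the adapter takes over; it is not NVIDIA's hardware implementation, it omits the rendezvous protocol, and `TagMatcher`, `ANY_SOURCE`, and `ANY_TAG` are illustrative stand-ins for the MPI concepts.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional

ANY_SOURCE = -1  # wildcard, analogous to MPI_ANY_SOURCE
ANY_TAG = -1     # wildcard, analogous to MPI_ANY_TAG


@dataclass
class Message:
    source: int
    tag: int
    comm: int
    payload: bytes


@dataclass
class Receive:
    source: int
    tag: int
    comm: int
    data: Optional[bytes] = None  # filled in when a message is matched


def matches(recv: Receive, msg: Message) -> bool:
    """MPI matching rule: same communicator, source and tag equal or wildcarded."""
    return (recv.comm == msg.comm
            and recv.source in (ANY_SOURCE, msg.source)
            and recv.tag in (ANY_TAG, msg.tag))


class TagMatcher:
    def __init__(self) -> None:
        self.posted: deque = deque()      # receives still waiting for a message
        self.unexpected: deque = deque()  # messages that arrived before a matching receive

    def post_receive(self, recv: Receive) -> Receive:
        # Search unexpected messages oldest-first, so the earliest match wins.
        for i, msg in enumerate(self.unexpected):
            if matches(recv, msg):
                del self.unexpected[i]
                recv.data = msg.payload
                return recv
        self.posted.append(recv)
        return recv

    def on_arrival(self, msg: Message) -> Optional[Receive]:
        # Search posted receives in the order they were posted.
        for i, recv in enumerate(self.posted):
            if matches(recv, msg):
                del self.posted[i]
                recv.data = msg.payload
                return recv
        self.unexpected.append(msg)
        return None
```

Both searches are linear scans over queues that grow with the number of outstanding messages, which is why moving them into the adapter frees CPU cycles at scale.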

Typical applications:

- High-performance computing (HPC): ideal for supercomputing clusters, MPI-based simulations, and scientific research workloads that require low latency and high message rates.

- AI and deep learning training: combined with GPUDirect RDMA, enables fast GPU-to-GPU communication across nodes and shortens training time.

- NVMe-oF storage systems: deploy as a storage target or initiator in NVMe over Fabrics environments for high-throughput, low-latency block storage access.

- Cloud and virtualized data centers: SR-IOV and virtualization offloads support multi-tenant environments with guaranteed quality of service and secure isolation.

- High-frequency trading (HFT): ultra-low latency and hardware timestamping (IEEE 1588v2) meet the requirements of financial services applications.
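When the card is deployed as an NVMe-oF target on Linux, the export itself is usually configured through the upstream kernel `nvmet` target's configfs tree. The sketch below shows that flow (subsystem, namespace, RDMA port, link) under stated assumptions: it runs as root with the `nvmet` and `nvmet-rdma` modules loaded, the NQN, block device, and IP address are placeholders, and the helper name is hypothetical. It configures the standard software target only; the ConnectX-5 hardware target offload is enabled through separate, driver-specific settings documented by NVIDIA and not shown here.

```python
from pathlib import Path


def export_nvme_over_rdma(nqn: str, device_path: str, traddr: str,
                          port_id: int = 1, nsid: int = 1, trsvcid: str = "4420",
                          root: str = "/sys/kernel/config/nvmet") -> Path:
    """Export one block device as an NVMe-oF namespace on an RDMA port via nvmet configfs."""
    base = Path(root)
    subsys = base / "subsystems" / nqn
    ns = subsys / "namespaces" / str(nsid)
    port = base / "ports" / str(port_id)

    # Creating the directories makes configfs populate their attribute files.
    ns.mkdir(parents=True, exist_ok=True)
    (subsys / "attr_allow_any_host").write_text("1")  # lab use only: no host allow-list

    # Back the namespace with a local block device, then enable it.
    (ns / "device_path").write_text(device_path)
    (ns / "enable").write_text("1")

    # Configure the RDMA listener (4420 is the IANA-assigned NVMe-oF port).
    (port / "subsystems").mkdir(parents=True, exist_ok=True)
    (port / "addr_trtype").write_text("rdma")
    (port / "addr_adrfam").write_text("ipv4")
    (port / "addr_traddr").write_text(traddr)
    (port / "addr_trsvcid").write_text(trsvcid)

    # Linking the subsystem into the port starts serving it.
    link = port / "subsystems" / nqn
    if not link.is_symlink():
        link.symlink_to(subsys)
    return link
```

A call such as `export_nvme_over_rdma("nqn.2024-01.io.example:demo", "/dev/nvme0n1", "192.0.2.10")` would expose that device to initiators over RDMA; in production, replace `attr_allow_any_host` with an explicit host allow-list.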

The MCX555A-ECAT is designed for broad interoperability with NVIDIA switches (Quantum InfiniBand and Spectrum Ethernet) and with third-party 100GbE switches. Its QSFP28 port supports passive copper DAC cables and active optical cables.

Operating systems and software stacks:

- RHEL / CentOS, Ubuntu, Windows Server, FreeBSD, VMware ESXi

- OpenFabrics Enterprise Distribution (OFED) / WinOF-2

- NVIDIA HPC-X، OpenMPI، MVAPICH2، Intel MPI، Platform MPI

- Data Plane Development Kit (DPDK) for kernel bypass
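After the driver stack (OFED or inbox drivers) is installed, a quick health check is to read the port attributes the RDMA subsystem exposes under `/sys/class/infiniband`, which is the same information tools such as `ibstat` and `ibv_devinfo` report. The sketch below assumes the device is named `mlx5_0` and uses port 1 (both hypothetical; names vary per system), and the helper name is illustrative.

```python
from pathlib import Path


def port_status(dev: str = "mlx5_0", port: int = 1,
                root: str = "/sys/class/infiniband") -> dict:
    """Read link state, physical state, link layer, and rate for one RDMA port."""
    pdir = Path(root) / dev / "ports" / str(port)

    def read(name: str) -> str:
        return (pdir / name).read_text().strip()

    rate = read("rate")  # e.g. "100 Gb/sec (4X EDR)"
    return {
        "state": read("state").split(":", 1)[-1].strip(),            # "4: ACTIVE" -> "ACTIVE"
        "phys_state": read("phys_state").split(":", 1)[-1].strip(),  # "5: LinkUp" -> "LinkUp"
        "link_layer": read("link_layer"),                            # "InfiniBand" or "Ethernet"
        "rate_gbps": float(rate.split()[0]),
    }
```

A healthy EDR link should show `state` as `ACTIVE` and `rate_gbps` of 100; a lower rate usually points to the cable, the switch port, or a peer running at an older generation.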

| Parameter | Specification |
|---|---|
| Model | MCX555A-ECAT |
| Form factor | Low-profile PCIe (14.2 cm × 6.9 cm without bracket); tall bracket pre-installed, short bracket included |
| Port speed and type | 1x QSFP28, up to 100Gb/s InfiniBand (EDR) and 100GbE |
| Host interface | PCI Express 3.0 x16 (compatible with x8, x4, x2, x1) |
| InfiniBand support | IBTA 1.3 compliant; 100Gb/s EDR, FDR, QDR, DDR, SDR; 8 virtual lanes + VL15; 16 million I/O channels |
| Ethernet support | 100GbE, 50GbE, 40GbE, 25GbE, 10GbE, 1GbE; IEEE 802.3cd, 802.3bj, 802.3by, 802.3ba, 802.3ae |
| RDMA capabilities | RDMA over Converged Ethernet (RoCE), hardware-based reliable transport, out-of-order RDMA, atomic operations |
| Storage offloads | NVMe over Fabrics target offload, iSER, SRP, NFS over RDMA, SMB Direct, T10 DIF signature handover |
| Virtualization | SR-IOV (up to 512 virtual functions), VMware NetQueue, NPAR, PCIe Access Control Services (ACS) |
| CPU offloads | Stateless TCP/UDP/IP offloads, LSO/LRO, checksum offload, RSS/TSS, VLAN/MPLS tag insertion and stripping |
| Overlay networks | Hardware offload of VXLAN, NVGRE, and GENEVE encapsulation/decapsulation |
| Management | NC-SI over MCTP, PLDM for monitoring/control and firmware update, I2C, SPI, JTAG |
| Remote boot | Remote boot over InfiniBand, Ethernet, and iSCSI; UEFI and PXE support |
| Power consumption | Not publicly specified; typically under 20 W (please verify for your system) |
| Operating temperature | 0 °C to 55 °C (typical ambient) |
| Compliance | RoHS, REACH, FCC, CE, VCCI, ICES, RCM |

Note: Specifications are taken from NVIDIA ConnectX-5 product documentation. For the latest details and firmware support, refer to the official NVIDIA release notes.
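The link rates in the specification table follow from each generation's per-lane signaling rate and line encoding, and comparing them with PCIe 3.0 host bandwidth (8 GT/s per lane, 128b/130b encoding) shows why an x16 slot is recommended. The sketch below is an illustrative calculation using the standard signaling rates, not vendor-published data, and it ignores packet-level (TLP/DLLP) overhead, so real PCIe throughput is somewhat lower than printed.

```python
# Per-lane signaling rate in Gbaud and line encoding as (payload bits, total bits)
IB_RATES = {
    "SDR": (2.5, 8, 10),
    "DDR": (5.0, 8, 10),
    "QDR": (10.0, 8, 10),
    "FDR": (14.0625, 64, 66),
    "EDR": (25.78125, 64, 66),
}


def ib_data_rate(gen: str, lanes: int = 4) -> float:
    """Usable data rate in Gb/s of an InfiniBand link (4x by default)."""
    baud, payload, total = IB_RATES[gen]
    return lanes * baud * payload / total


def pcie_gen3_rate(lanes: int) -> float:
    """PCIe 3.0 bandwidth per direction in Gb/s after 128b/130b encoding only."""
    return lanes * 8.0 * 128 / 130


if __name__ == "__main__":
    for gen in IB_RATES:
        print(f"4x {gen}: {ib_data_rate(gen):.1f} Gb/s")
    for width in (16, 8):
        print(f"PCIe 3.0 x{width}: {pcie_gen3_rate(width):.1f} Gb/s per direction")
```

Running it gives 100.0 Gb/s for 4x EDR, about 126 Gb/s for PCIe 3.0 x16, and about 63 Gb/s for x8, so the card works in an x8 slot but cannot sustain its full line rate there.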

| Ordering part number | Ports / speed | Host interface | Form factor | Key features |
|---|---|---|---|---|
| MCX555A-ECAT | 1x QSFP28, 100Gb/s | PCIe 3.0 x16 | Low-profile PCIe | Standard single port, EDR InfiniBand / 100GbE |
| MCX556A-ECAT | 2x QSFP28, 100Gb/s | PCIe 3.0 x16 | Low-profile PCIe | Dual port, EDR / 100GbE |
| MCX556A-EDAT | 2x QSFP28, 100Gb/s | PCIe 4.0 x16 | Low-profile PCIe | ConnectX-5 Ex, enhanced PCIe Gen4 |
| MCX556M-ECAT-S25 | 2x QSFP28, 100Gb/s | 2x PCIe 3.0 x8 | Socket Direct | Dual-socket server connectivity via harness |
| MCX545B-ECAN | 1x QSFP28, 100Gb/s | PCIe 3.0 x16 | OCP 2.0 Type 1 | Open Compute Project form factor |

For other OCP or Multi-Host variants, please contact sales. All cards are backward compatible with lower speeds.

Key benefits:

- Superior application performance: hardware offloads for MPI, NVMe-oF, and overlay networks free CPU cores for business logic.

- Scalable RDMA networking: DCT, XRC, and out-of-order RDMA help RDMA applications scale to thousands of nodes.

- GPU-acceleration ready: GPUDirect RDMA enables direct memory access between GPUs and network adapters, removing CPU bottlenecks in AI clusters.

- Flexible deployment: a single QSFP28 port simplifies cabling and suits 100Gb/s leaf-spine architectures.

- Investment protection: support for both InfiniBand and Ethernet allows a seamless transition between protocols as requirements evolve.

Hong Kong Starsurge Group provides full lifecycle support for NVIDIA ConnectX-5 adapters, including pre-sales configuration assistance, firmware update guidance, and warranty service. Our technical team can help with:

- Verifying compatibility with your server and switch infrastructure

- Performance tuning for HPC or storage workloads

- Custom bracket options and bulk packaging requirements

- RMA processing and advance replacement service

Contact our sales engineers for volume pricing and lead times.

- Electrostatic discharge (ESD): always follow ESD-safe practices when handling the adapter, and keep it in its antistatic packaging until installation.

- Cooling requirements: ensure adequate airflow in the server chassis to keep the card within the specified operating temperature range.

- Firmware updates: use the official NVIDIA firmware tools (MFT), and verify compatibility with your operating system and driver versions before updating.

- Cable bending: follow the QSFP28 cable bend-radius guidelines to avoid signal loss.

This is a Class A product. In a residential environment it may cause radio interference. Ensure proper shielding and grounding in accordance with local regulations.

Founded in 2008, Hong Kong Starsurge Group Co., Limited is a technology-driven provider of network hardware, IT services, and system integration solutions. Serving customers worldwide with products including network switches, NICs, wireless access points, controllers, and high-speed cabling, Starsurge combines deep technical expertise with a customer-focused approach. The company supports industries such as government, healthcare, manufacturing, education, finance, and enterprise, and offers IoT solutions, network management systems, custom software development, and multilingual global delivery. With a focus on reliable quality and responsive service, Starsurge helps customers build efficient, scalable, and reliable network infrastructure.

| Component / system | Compatibility status | Notes |
|---|---|---|
| NVIDIA Quantum InfiniBand switches | Verified | EDR; also works with HDR switches given appropriate firmware (the link runs at up to EDR, the card's maximum) |
| NVIDIA Spectrum Ethernet switches | Verified | 100GbE, 50GbE, and 25GbE modes supported |
| Third-party 100GbE switches | Compatible | Requires IEEE standards compliance; tested with major vendors |
| GPU servers (NVIDIA DGX, HGX) | Verified with GPUDirect | RDMA acceleration for multi-GPU communication |
| Storage arrays with NVMe-oF | Supported | Target offload enables efficient networked NVMe access |

Selection checklist:

- ☑ Confirm that the server has an available PCIe 3.0 x16 (or x8) slot with sufficient clearance.

- ☑ Determine the port count: single-port (MCX555A-ECAT) versus dual-port (MCX556A-ECAT).

- ☑ Choose the cable type: passive copper DAC for short runs (≤5 m) or optical cabling for longer distances.

- ☑ Confirm operating system and driver support (OFED, Windows, VMware).

- ☑ For GPU clusters, verify GPUDirect RDMA compatibility with your GPU model and driver version.

- ☑ Check whether your server chassis requires the tall or the short bracket.
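The checklist rules can be captured in a small planning helper. Everything here is hypothetical and illustrative: `plan_adapter` and `Plan` are not an NVIDIA tool, the 5 m passive DAC cutoff is taken from the checklist, the part numbers come from the ordering table, and the bandwidth warnings use PCIe 3.0 figures (8 GT/s per lane, 128b/130b encoding, packet overhead ignored).

```python
from dataclasses import dataclass, field
from typing import List

DAC_MAX_M = 5.0  # passive copper DAC reach assumed from the checklist (<= 5 m)


@dataclass
class Plan:
    part_number: str
    cable: str
    warnings: List[str] = field(default_factory=list)


def plan_adapter(ports: int, slot_lanes: int, cable_length_m: float) -> Plan:
    """Apply the selection checklist to one server (hypothetical helper)."""
    if ports not in (1, 2):
        raise ValueError("expected 1 or 2 ports")
    part = "MCX555A-ECAT" if ports == 1 else "MCX556A-ECAT"
    cable = ("passive copper DAC" if cable_length_m <= DAC_MAX_M
             else "optical (AOC or transceivers with fiber)")
    warnings = []
    if slot_lanes < 16:
        gbps = slot_lanes * 8 * 128 / 130  # PCIe 3.0 per-direction bandwidth
        warnings.append(f"x{slot_lanes} slot gives about {gbps:.0f} Gb/s, "
                        "below the 100 Gb/s line rate")
    if ports == 2:
        warnings.append("two 100 Gb/s ports exceed PCIe 3.0 x16 (~126 Gb/s); "
                        "consider the PCIe 4.0 MCX556A-EDAT in a Gen4 slot")
    return Plan(part, cable, warnings)
```

For instance, a single-port card in an x16 slot with a 3 m run yields the MCX555A-ECAT with a passive DAC and no warnings, while a dual-port card in an x8 slot produces both bandwidth warnings.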

Related products:

- NVIDIA MCX556A-ECAT: dual-port 100Gb/s ConnectX-5 adapter

- NVIDIA MCX556A-EDAT: ConnectX-5 Ex with PCIe 4.0 support

- NVIDIA Quantum-2 QM9700 InfiniBand switch (32 OSFP ports, 64 × 400Gb/s NDR)

- Mellanox QSFP28 passive copper DAC cables (1 m, 2 m, 3 m)

- NVIDIA Spectrum-4 SN5600 100GbE/400GbE Ethernet switches

Resources:

- NVIDIA ConnectX-5 InfiniBand Adapter Card User Manual

- RDMA over Converged Ethernet (RoCE) Deployment Guide

- Best Practices for GPUDirect RDMA AI Clusters

- NVMe over Fabrics with ConnectX-5: Configuration Guide

- OFED Installation and Tuning Guide